The Web is Still Small After More Than a Decade - A Revisit Study of Web Co-location

Abstract

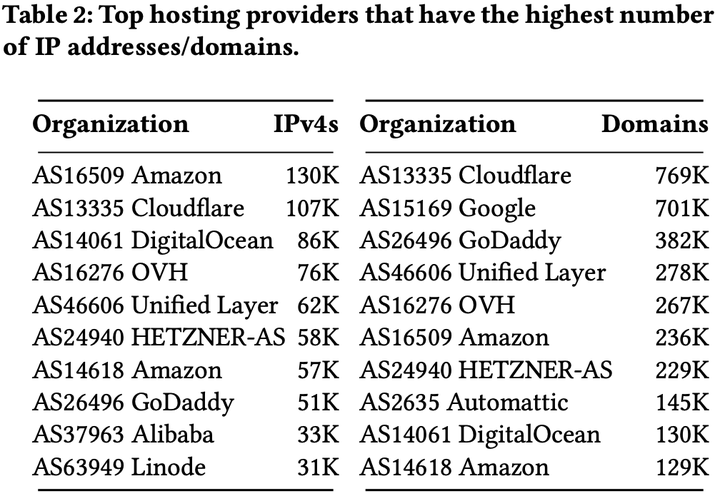

Understanding web co-location is essential for various reasons. For instance, it can help one assess the collateral damage that denial-of-service attacks or IP-based blocking can cause to the availability of co-located web sites. However, more than a decade has passed since the first study was conducted in 2007. The Internet infrastructure has changed drastically since then, necessitating a renewed study to comprehend the nature of web co-location. In this paper, we conduct an empirical study to revisit web co-location using datasets collected from active DNS measurements. Our results show that the web is still small and concentrated among a handful of hosting providers. More specifically, we find that more than 60% of web sites are co-located with at least ten other web sites. This group consists of less popular web sites. In contrast, 17.5% of web sites, most of them popular, are served from their own servers. Although a high degree of web co-location could make co-hosted sites vulnerable to DoS attacks, our findings show an increasing trend to co-host many web sites and serve them from the well-provisioned content delivery networks (CDNs) of major providers, which offer advanced DoS protection. Despite the high degree of web co-location, our analyses of popular block lists indicate that IP-based blocking does not cause collateral damage as severe as previously thought.

Dataset:

For this study, we ran large-scale active DNS measurements on a daily basis from nine countries: Brazil, Germany, India, Japan, New Zealand, Singapore, the United Kingdom, the United States, and South Africa. On average, we resolved 8.6M domain names five times per day at each experimental location.

To support the reproducibility of our work and assist future related studies, we have made our dataset publicly available via this Google Drive folder.

Each directory contains the DNS data collected in one of the aforementioned countries. We compressed the data using zstd. To decompress it, install zstd and run the following command. (Please be cautious: the raw text files are quite large!)

    zstd -d file.txt.zstd
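Because the raw text files are large, it may be preferable to stream the decompressed lines rather than write them to disk. The following Python sketch is one way to do that, under the assumption that the `zstd` command-line tool is installed and on the `PATH`. The helper names `zstd_command` and `stream_lines` are hypothetical and are not part of the dataset or of zstd itself.

```python
import subprocess
from typing import Iterator, List


def zstd_command(path: str) -> List[str]:
    """Build a zstd invocation that decompresses `path` to standard output.

    -d selects decompression and -c writes the result to stdout,
    so no decompressed copy is created on disk.
    """
    return ["zstd", "-d", "-c", path]


def stream_lines(path: str) -> Iterator[str]:
    """Yield decompressed lines one at a time (assumes `zstd` is installed)."""
    with subprocess.Popen(
        zstd_command(path), stdout=subprocess.PIPE, text=True
    ) as proc:
        assert proc.stdout is not None
        for line in proc.stdout:
            yield line.rstrip("\n")
    # Reached only when the stream was read to the end.
    if proc.returncode != 0:
        raise RuntimeError(f"zstd exited with status {proc.returncode}")
```

A caller could then iterate with `for line in stream_lines("file.txt.zstd"): ...` and process each record as it arrives.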

Each line in the raw text files is a JSON object containing the DNS response for one domain. For example, this is the DNS response for google.com obtained from our experimental machine located in Brazil.

    {
      "class": "IN",
      "data": {
        "additionals": [],
        "answers": [
          {
            "answer": "172.217.29.14",
            "class": "IN",
            "name": "google.com",
            "ttl": 300,
            "type": "A"
          }
        ],
        "authorities": [],
        "flags": {
          "authenticated": false,
          "authoritative": true,
          "checking_disabled": false,
          "error_code": 0,
          "opcode": 0,
          "recursion_available": false,
          "recursion_desired": false,
          "response": true,
          "truncated": false
        },
        "protocol": "udp"
      },
      "name": "google.com",
      "status": "NOERROR",
      "timestamp": "2019-07-27T01:07:33Z"
    }
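To illustrate how records in this format might be used, here is a minimal Python sketch that groups domains by the IPv4 address in their A records, which gives a rough view of co-location. It is not the analysis pipeline from the paper. It assumes every record has the same fields as the google.com example above (`status`, `name`, `data.answers`), and the function name `colocation_map` is hypothetical.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, Set


def colocation_map(lines: Iterable[str]) -> Dict[str, Set[str]]:
    """Map each IPv4 address to the set of queried domains that resolve to it."""
    domains_by_ip: Dict[str, Set[str]] = defaultdict(set)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        record = json.loads(line)
        if record.get("status") != "NOERROR":
            continue  # keep successful resolutions only
        answers = record.get("data", {}).get("answers") or []
        for answer in answers:
            if answer.get("type") == "A":
                # Use the queried name rather than the answer's own name,
                # which (an assumption) could be a CNAME target.
                domains_by_ip[answer["answer"]].add(record["name"])
    return domains_by_ip


# Usage with a shortened version of the example record.
sample = (
    '{"name": "google.com", "status": "NOERROR", "data": {"answers": '
    '[{"answer": "172.217.29.14", "name": "google.com", "type": "A"}]}}'
)
result = colocation_map([sample])
```

Here `result` maps `"172.217.29.14"` to a set containing `"google.com"`. Over a full file, addresses whose sets contain many domains would indicate co-hosted web sites.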

P.S.: To help us improve future projects and understand how this dataset has been used, we would appreciate it if you cite our paper when you find the data provided here useful. Questions or feedback about the dataset or our research paper can be sent to [email protected]